The Hidden Cost of Every AI Prompt You Send

A few weeks ago, a Substack writer ran some numbers on AI and the environment. The community corrected him publicly. What came out of that thread was one of the most useful reframes I have read on the AI boom - not because it was doom and gloom, but because it was honest in a way the industry never is.

Here is what those numbers actually say, what they leave out, and why any freelancer or creator building a career on AI tools right now should care deeply.

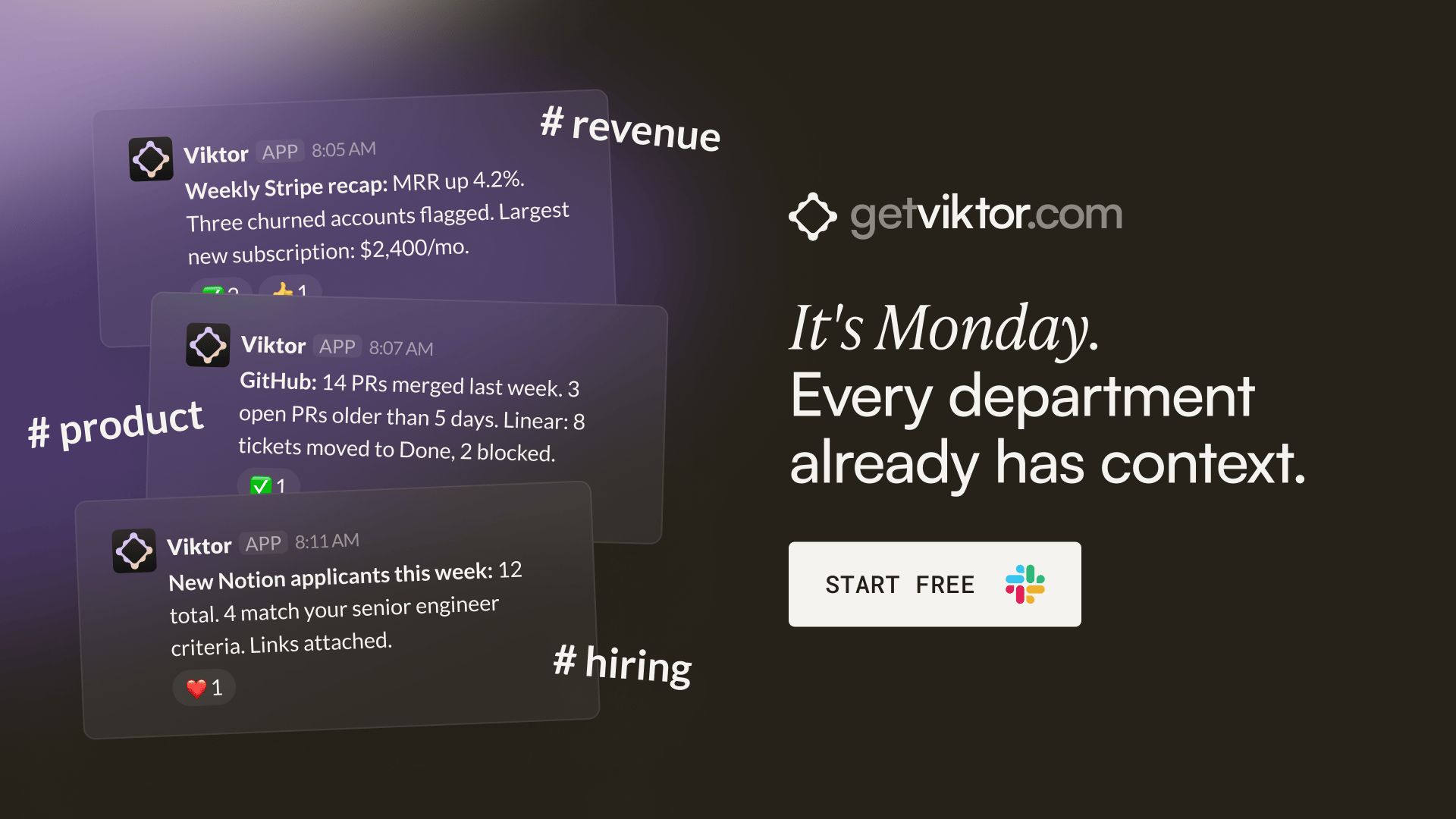

Training vs. Inference: The Stat Everyone Gets Wrong

You have probably seen the comparisons. Training GPT-3 used about 5.4 million litres of water. California almond farms use 1 to 2 trillion litres a year. On that arithmetic, one day of almonds could train GPT-3 roughly 500 to 1,000 times over, and one year of almonds could do it hundreds of thousands of times.
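
For anyone who wants to check that comparison, here is a minimal sketch using only the figures quoted above. The variable names are mine and the 365-day year is an assumption. It keeps the per-day and per-year multiples separate, because they are easy to mix up.

```python
# Figures as quoted in the article; nothing here is measured independently.
GPT3_TRAINING_LITRES = 5.4e6             # water attributed to training GPT-3
ALMOND_LITRES_PER_YEAR = (1e12, 2e12)    # low and high estimate for California almonds
DAYS_PER_YEAR = 365                      # assumption: a plain calendar year


def training_runs(litres: float) -> float:
    """Number of GPT-3 training runs a given volume of water would cover."""
    return litres / GPT3_TRAINING_LITRES


# Multiples for a full year of almond irrigation (low, high).
runs_per_year = tuple(training_runs(v) for v in ALMOND_LITRES_PER_YEAR)

# Multiples for a single day of almond irrigation (low, high).
runs_per_day = tuple(
    training_runs(v / DAYS_PER_YEAR) for v in ALMOND_LITRES_PER_YEAR
)

print(f"Per year: {runs_per_year[0]:,.0f} to {runs_per_year[1]:,.0f} training runs")
print(f"Per day:  {runs_per_day[0]:,.0f} to {runs_per_day[1]:,.0f} training runs")
```

On these inputs a year of almonds covers about 185,000 to 370,000 training runs, while a single day covers roughly 500 to 1,000.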

So far, so manageable. But that comparison breaks down the moment you stop looking at training and start looking at inference - the process of the model actually answering you each time you hit send.

Training happens once per model. Inference happens on every query, from hundreds of millions of users a week and counting.

ChatGPT alone has roughly 800 million weekly users. OpenAI's own published figure puts water use at around 0.3 ml per query. Researchers put the real figure five to fifty times higher once the water consumed off site to generate the electricity is included. Run the maths across typical usage and you get a range of 4.4 to 44 billion litres of water consumed per year - just from ChatGPT, on conservative assumptions.
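
The usage assumption behind that range is not spelled out, so here is a rough sketch of one way to reproduce it. The user count, the 0.3 ml figure and the 5x to 50x multipliers come from the paragraph above. The 10 queries per user per day is my assumption, chosen because it lands on the quoted range, and it treats every weekly user as active every day.

```python
# From the article.
WEEKLY_USERS = 800e6          # ChatGPT weekly users
BASE_ML_PER_QUERY = 0.3       # OpenAI's published per-query water figure
MULTIPLIERS = (5, 50)         # researchers' range once off-site water is included

# ASSUMPTION (not from the article): every user sends 10 queries a day,
# every day of a 365-day year.
QUERIES_PER_USER_PER_DAY = 10
DAYS_PER_YEAR = 365


def annual_litres(ml_per_query: float,
                  users: float = WEEKLY_USERS,
                  queries_per_user_per_day: float = QUERIES_PER_USER_PER_DAY) -> float:
    """Litres of water per year for a given per-query figure in millilitres."""
    queries_per_year = users * queries_per_user_per_day * DAYS_PER_YEAR
    return queries_per_year * ml_per_query / 1000  # ml -> litres


low_litres, high_litres = (
    annual_litres(BASE_ML_PER_QUERY * m) for m in MULTIPLIERS
)

print(f"Low estimate:  {low_litres / 1e9:.1f} billion litres per year")
print(f"High estimate: {high_litres / 1e9:.1f} billion litres per year")
```

Change `QUERIES_PER_USER_PER_DAY` and the totals scale in direct proportion, which is the point: the per-query figure is tiny, and the volume is what drives the result.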

The industry talks about training because training is the defensible story. It is bounded. Inference is not.

The Numbers the Companies Choose Not to Report

Carbon footprints are reported in three layers. Scope 1 is what a company directly emits on site. Scope 2 is the electricity it buys. Scope 3 is everything else - supply chains, hardware manufacturing, construction.

Microsoft's 2024 Environmental Sustainability Report shows Scope 3 emissions up 30.9 percent from their 2020 baseline. Their own explanation points to datacentre construction and the embodied carbon in semiconductors, servers, and racks.

For some scale: if 800 million ChatGPT users each generated one AI image in a single round, researchers estimate the electricity used would produce roughly 3,700 tonnes of CO2. That is about what 2,500 average UK cars emit in a year - for one round of images.
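
To see what those totals imply per image and per car, here is a small sketch that only divides the quoted figures. It does not model electricity or grid carbon intensity, and the variable names are mine.

```python
# Figures as quoted in the article.
TOTAL_TONNES_CO2 = 3_700   # one round of images
IMAGES = 800e6             # one image per weekly ChatGPT user
UK_CARS = 2_500            # cars in the article's comparison

GRAMS_PER_TONNE = 1e6

# Implied emissions for a single generated image, in grams of CO2.
grams_per_image = TOTAL_TONNES_CO2 * GRAMS_PER_TONNE / IMAGES

# Implied annual emissions of one average UK car, in tonnes of CO2.
tonnes_per_car_year = TOTAL_TONNES_CO2 / UK_CARS

print(f"Implied CO2 per image: {grams_per_image:.2f} g")
print(f"Implied CO2 per car per year: {tonnes_per_car_year:.2f} t")
```

The implied figures are a little under 5 g of CO2 per image and about 1.5 tonnes per car per year. Each image is small; 800 million of them are not.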

The UK government originally projected AI datacentre emissions at 0.05 percent of Britain's total carbon. After a Carbon Brief investigation last month, the revised figure is 34 to 123 million tonnes of CO2 by 2035. That is hundreds of times higher than the original estimate.

The pattern is consistent. Companies report what can be framed as achievement. They omit what can only be framed as cost.

What This Actually Means If You Build Online

Here is the part that most AI newsletters skip over: a meaningful share of the compute being built for AI inference is being used to generate content no one asked for, read by no one, remembered by no one. AI-written LinkedIn posts summarised by other AI tools for audiences that do not exist. Automated email sequences for cold outreach no one opens. Blog content produced at volume with zero genuine insight behind it.

If you are a freelancer, creator, or founder competing in any of those spaces right now, you are up against a machine that is spending real resources - water, energy, capital - to produce noise. That is actually good news for you, if you understand what it means.

The real competitive edge in 2026 is not volume. It is the ability to produce work that a reader can tell was made by a human who thought carefully. That gap is widening, not closing.

Every major compute deal being signed right now - including Anthropic's multi-gigawatt agreement with Google and Broadcom, scheduled to start in 2027 - is meant to serve inference demand at scale. That infrastructure exists to handle the volume of synthetic content. It is not being built for the thoughtful 500-word piece that actually converts a reader into a client.

Four Things Worth Doing Differently

Default to smaller models. Claude Haiku instead of Opus. GPT-5-mini before GPT-5. The free tier before the paid one. Reserve large frontier models for tasks that genuinely need them. The smaller model is cheaper, faster, and lighter on resources.

Ask before you generate. Every prompt has a cost. Before you run it, ask whether the output will be read, used, or remembered. If the honest answer is no, skip it. This is also just better creative discipline.

Ask what your employer or client is procuring. If your company or a client is buying AI at scale, ask what the environmental audit shows. Making this a standard procurement question is how the conversation changes at the institutional level.

Watch the policy vehicles. In the UK, the AI Regulation Bill is the live vehicle. In the US, it is the Artificial Intelligence Environmental Impacts Act in the Senate. Per-query energy and water disclosure by region is the specific ask worth pushing for.

The Honest Takeaway

None of this means stop using AI tools. The researcher who wrote the original analysis made that point clearly. Disabled users for whom these tools remove real barriers that traditional infrastructure never addressed are the last people anyone should blame for a system they did not build.

The structural argument is with the companies that will not disclose the numbers, the planning regimes that allow water-stressed regions to absorb datacentre buildouts without community input, and the reporting frameworks that treat Scope 3 as optional.

For anyone building a career online, the clearest practical conclusion is this: the volume play is funded and resourced by infrastructure you cannot compete with. The quality play is yours to own. The machines are extraordinarily good at producing a lot. They are still not good at caring whether it matters.